* read_config():

Reading default configuration from /etc/masterha_default.cnf..

Reading application default configuration from /etc/app1.cnf..

Reading server configuration from /etc/app1.cnf..

* connect_all_and_read_server_status:

connect_check: first run a connection check to make sure the MySQL service on every server is up.

connect_and_get_status:

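Collect each MySQL instance's server_id, mysql_version, log_bin, and related settings.

Run SHOW SLAVE STATUS to identify the current master node. If the output is empty, the node is treated as the master. (As of 0.56 the master is no longer determined this way, because multi-master is now supported.)

validate_current_master: fetch the master node's information and verify that the configuration is correct.

Check whether any server is down; if so, abort the rotate.

Check whether the master is alive; if it is dead, abort the rotate.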

check_repl_priv:

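Check whether the user has replication privileges.

Acquire monitor_advisory_lock to ensure that no other monitor process is currently running against the master.

Executes: SELECT GET_LOCK('MHA_Master_High_Availability_Monitor', ?) AS Value

Acquire failover_advisory_lock to ensure that no other failover process is currently running against the slaves.

Executes: SELECT GET_LOCK('MHA_Master_High_Availability_Failover', ?) AS Value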
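The two GET_LOCK calls can be sketched in Python as follows. This is only an illustration of the advisory-lock pattern, not MHA's own Perl code: the helper name `try_advisory_lock` is hypothetical, and the `%s` placeholders and tuple rows assume a PyMySQL-style DB-API cursor. GET_LOCK returns 1 when the lock is acquired, 0 on timeout, and NULL on error, so only 1 counts as success.

```python
from typing import Any

# Lock names as they appear in the statements above.
MONITOR_LOCK = "MHA_Master_High_Availability_Monitor"
FAILOVER_LOCK = "MHA_Master_High_Availability_Failover"


def try_advisory_lock(cursor: Any, lock_name: str, timeout_s: int = 0) -> bool:
    """Return True only if GET_LOCK reports that the lock was acquired.

    `cursor` is assumed to be a DB-API cursor that returns tuple rows and
    accepts %s placeholders (an assumption; adjust for your driver).
    """
    cursor.execute("SELECT GET_LOCK(%s, %s) AS Value", (lock_name, timeout_s))
    row = cursor.fetchone()
    # 1 = acquired, 0 = timed out (someone else holds it), NULL = error.
    value = row[0] if row else None
    return value == 1
```

If the call returns False for either lock name, another monitor or failover process is presumably holding it, and the switch should not proceed.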

check_replication_health:

Executes SHOW SLAVE STATUS to determine the following: current_slave_position and has_replication_problem.

has_replication_problem checks the following: the IO thread, the SQL thread, and Seconds_Behind_Master (1s).
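The three checks can be sketched as a small Python predicate over one SHOW SLAVE STATUS row. This is a hedged illustration rather than MHA's implementation: the function name is borrowed from the text, while the `max_delay_s` parameter and the choice to treat a missing row or a NULL Seconds_Behind_Master as a problem are assumptions.

```python
from typing import Mapping, Optional


def has_replication_problem(
    status: Optional[Mapping[str, object]], max_delay_s: int = 1
) -> bool:
    """Return True if a slave's SHOW SLAVE STATUS row looks unhealthy."""
    if not status:
        # Assumption: a slave with no replication status is unhealthy.
        return True
    if status.get("Slave_IO_Running") != "Yes":
        return True  # IO thread is not running
    if status.get("Slave_SQL_Running") != "Yes":
        return True  # SQL thread is not running
    delay = status.get("Seconds_Behind_Master")
    if delay is None:
        return True  # NULL delay means replication is not progressing
    # Delay above the threshold (1s in the text) counts as a problem.
    return int(delay) > max_delay_s
```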

get_running_update_threads:

Uses SHOW PROCESSLIST to check whether any threads are currently executing updates; if any are found, abort the switch.
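A rough Python sketch of this kind of filter over SHOW PROCESSLIST rows is shown below. The exact rules MHA applies are not given here, so the regular expression, the `own_id` parameter, and the restriction to `Command = 'Query'` are all assumptions made for illustration.

```python
import re
from typing import Iterable, List, Mapping, Optional

# Assumption: statements starting with these keywords count as "updates".
_WRITE_RE = re.compile(
    r"^\s*(insert|update|delete|replace|alter|create|drop|truncate)\b",
    re.IGNORECASE,
)


def running_update_threads(
    processlist: Iterable[Mapping[str, object]], own_id: Optional[int] = None
) -> List[Mapping[str, object]]:
    """Return the processlist rows that appear to be executing a write."""
    found = []
    for row in processlist:
        if own_id is not None and row.get("Id") == own_id:
            continue  # skip the connection that ran SHOW PROCESSLIST
        if row.get("Command") != "Query":
            continue  # Sleep, Binlog Dump, etc. are not active statements
        info = str(row.get("Info") or "")
        if _WRITE_RE.match(info):
            found.append(row)
    return found
```

A non-empty result would correspond to the "abort the switch" case described above.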

$self->validate_current_master():

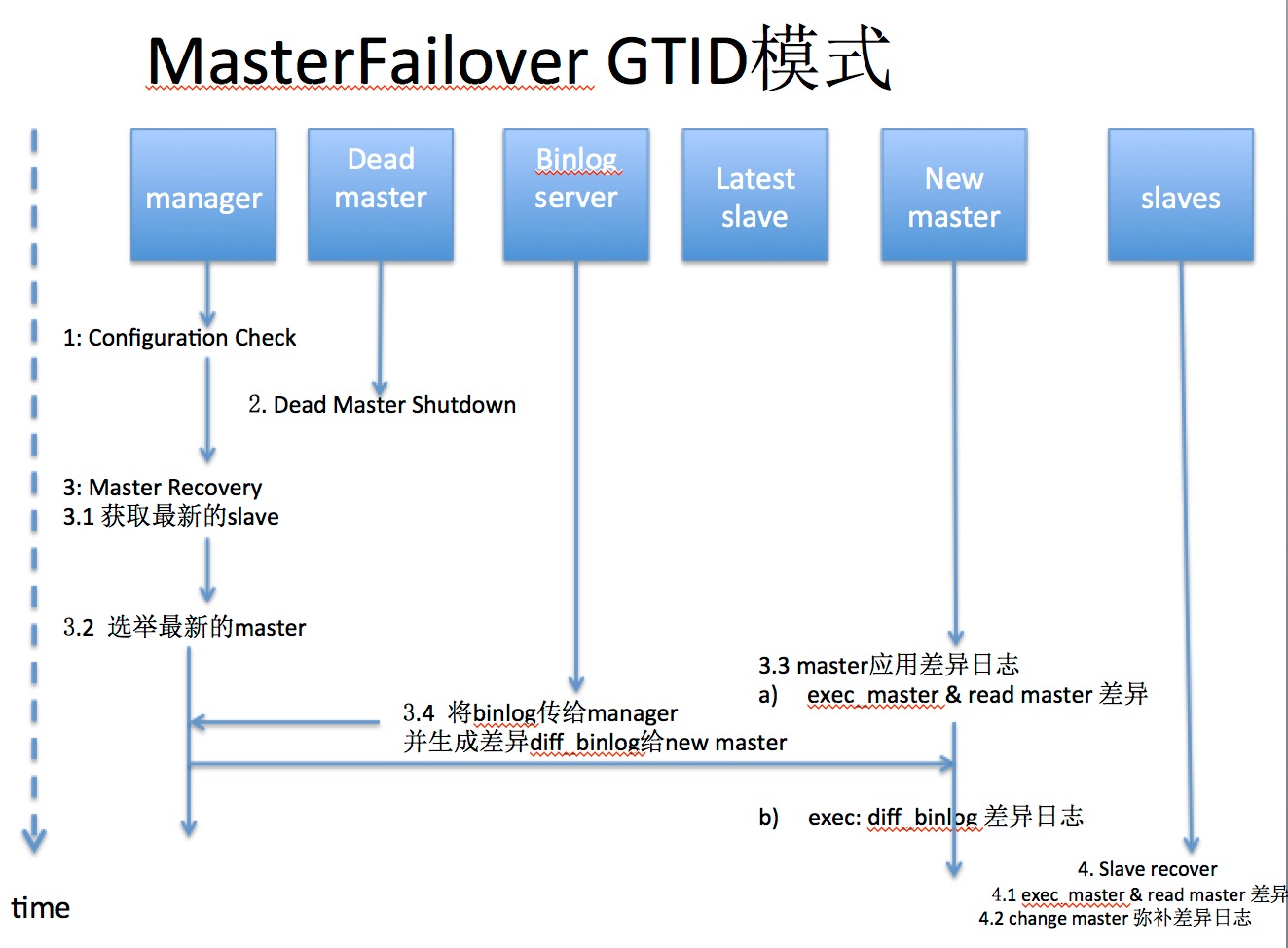

Check whether GTID mode is in use.